The Operating Stack

Layers that Operationalize Systems of Intelligence

Building with fewer resources can feel like a losing battle. You look over at your competitor who seems to have more of everything. However, this “more” may lack substance and distract from your core value proposition. That realization underpins our Constraint Advantage thesis:

“Scarcity-induced choices that improve unit economics or deepen defensibility faster than capital-rich rivals can replicate.”

In our previous post, we outlined the challenging decisions that African founders face on where to play and how to win. Through a short series, we now focus on Where You Build, How You Operate, and How You Distribute. This note addresses Where You Build using a taxonomy constructed from working with a wide range of founders across Africa.

Taxonomy: Where You Build

Systems of Intelligence (SOI), according to Geoffrey Moore and, later, Jerry Chen (Greylock – here & here), represent the decision layer that learns from enterprise data and improves work. SOIs are connected to “data chests” roughly categorized as:

Systems of Record: data stores and process backbones e.g., CRM, HRMS or ERP.

Systems of Engagement: user-facing channels that often link to systems of record e.g., email, Slack, WhatsApp, TikTok.

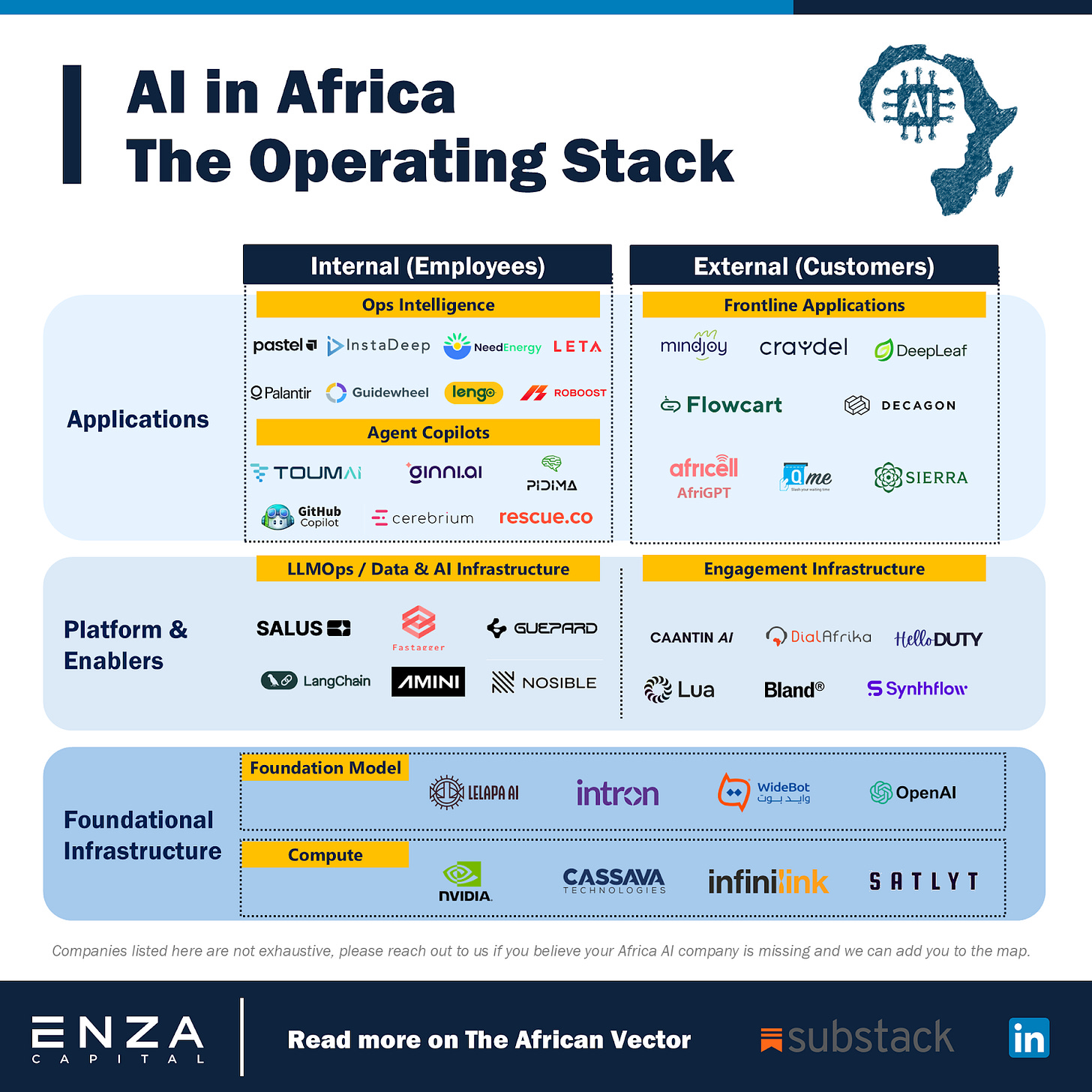

AI systems are Systems of Intelligence, learning from Systems of Record and acting through Systems of Engagement. We operationalize that idea in our B2B AI Operating Stack, where Foundational Infrastructure provides the core power, Platform & Enablers connect the data or model to the user, and Applications are the Systems of Intelligence in action.

B2B AI Operating Stack

Exhibit: Operating Stack - Layers that Operationalize Systems of Intelligence

We map African B2B AI companies building systems of intelligence into a practical hierarchy.

1. Foundational Infrastructure: This is the base layer of the stack, providing the core power for all systems built on top. It includes the foundation models and compute resources that are the building blocks of AI-native products. In this note we focus on foundation models and bracket compute and associated physical infrastructure for a later post. The African foundation model providers highlighted below have also productized their models and deliver them straight to the end user. Examples include:

Widebot building an Arabic dialect LLM powering customer communication chatbots for businesses and governments.

Lelapa AI building an LLM for African languages starting with isiZulu transcription services.

Intron building an LLM for transcription and voice agents across multiple languages and accents e.g., Pidgin English and Swahili.

2. Platform and Enablers: This layer supports the application workflows above it. These companies provide the essential tooling to build, evaluate, and securely deploy performant AI solutions, often as embedded tools or white-label services. The primary archetypes we observe in this category are:

LLMOps / Data & AI infrastructure e.g., Guepard, Salus Cloud: Tools to prepare and govern data, adapt and evaluate models, and serve them cost-effectively.

Engagement Infrastructure e.g., HelloDuty, Lua: Platforms that aggregate data and access across customer engagement channels to deploy an optimal AI solution where users live.

3. Applications: This is the layer closest to the user and the brand they interact with most directly. We segment this by the end user:

Internal to the Org (Employees): Solutions designed to make teams faster, more precise and more effective.

Ops Intelligence: Provide deep insight to improve decision-making and task competency. These tools monitor systems, identify gaps, and suggest improvements. Examples include Pastel for monitoring financial fraud and Leta powering delivery and fleet management to improve logistics outcomes.

Agent Copilots: Help employees execute tasks and ensure quality. Examples include Pidima, which helps manufacturers prepare compliance documentation, and Rescue, which is building an internal QA tool that accurately audits emergency calls for protocol adherence to improve call handling and dispatch procedures.

External to the Org (Customers): Solutions that drive behavioral change to enhance customer engagement, retention, and revenue.

Each layer carries different capital and technical demands. The most intensive demands sit at the foundational layer, where lengthy and costly R&D cycles extend your time to market and delay validation of customer demand (cash earned). As you move up the stack you can test willingness to pay faster, but you should also expect higher competitive pressure.

What will separate winners in the next 3–5 years is an operating rhythm of punctuated gains: capitalizing on technological step-changes, then consolidating unit economics before the next technology inflection. We explore this in the next part of the series.